On Wednesday, a survey of 700 software engineering leaders across five countries found that AI coding tools have transformed their work faster than the industry's measurement frameworks can track — and that 94% say the costs accumulating in blind spots like tech debt, validation time, and developer burnout are missing from their metrics entirely. The report, The State of Engineering Excellence 2026, was published by a company called Harness. The coincidence of its name is not lost on practitioners: across engineering teams at OpenAI, Anthropic, Google, Meta, and beyond, a consensus has quietly crystallized around a new discipline — also called harness engineering — that holds that the most important lever in AI development has shifted away from the model itself and toward the system built around it.

The Arc: Four Paradigms in Three Years

The lineage spans four eras, the first three lasting roughly a year or less each. Prompt Engineering (late 2022 to mid-2023) treated a single instruction as the entire program. Context Engineering (mid-2023 to mid-2024) treated the context window as a workspace to be curated with retrieval, memory, and tool definitions. Agent Engineering (mid-2024 to mid-2025) handed the loop itself over to the model, letting it reason, act, and observe on its own.

Each transition was driven by a combination of model capability jumps, exposed engineering pain points, and commercial pressure — not by any single vendor's roadmap. Harness Engineering is the natural fourth step, and the one in which the engineering lever finally moves outside the model entirely.

Secondary sources attribute the name to HashiCorp co-founder Mitchell Hashimoto, who stated the core principle in a blog post in early February 2026: "Anytime you find an agent makes a mistake, you take the time to engineer a solution such that the agent never makes that mistake again." The term received a formal definition in an OpenAI post by Ryan Lopopolo, published on February 11, 2026, and built on the experience of shipping a production application with zero human-written code.

A Quiet Reframing of the Engineering Lever

Throughout the prompt, context, and agent engineering eras, the dominant assumption was that the model itself was the determining variable. Teams optimized around the next model release. By late 2025, that assumption had quietly collapsed.

The performance gap between frontier models from different labs had narrowed substantially, while the gap between the same model with and without a well-designed harness had widened sharply. Cursor and OpenAI's Codex CLI run on overlapping model substrates yet deliver markedly different developer experiences. Anthropic's Claude Code swaps model weights between versions, but users overwhelmingly cite harness features, not model upgrades, as the more impactful changes.

The February 2026 moment made this visible in extreme form. Lopopolo's team at OpenAI's Frontier Product Exploration group ran a five-month experiment: building and shipping an internal beta product with zero manually written code. The codebase grew to over one million lines of code, spread across more than 1,500 pull requests — all authored, reviewed, and merged by AI agents. The engineers' own verdict: the discipline showed up more in the scaffolding than in the code itself.

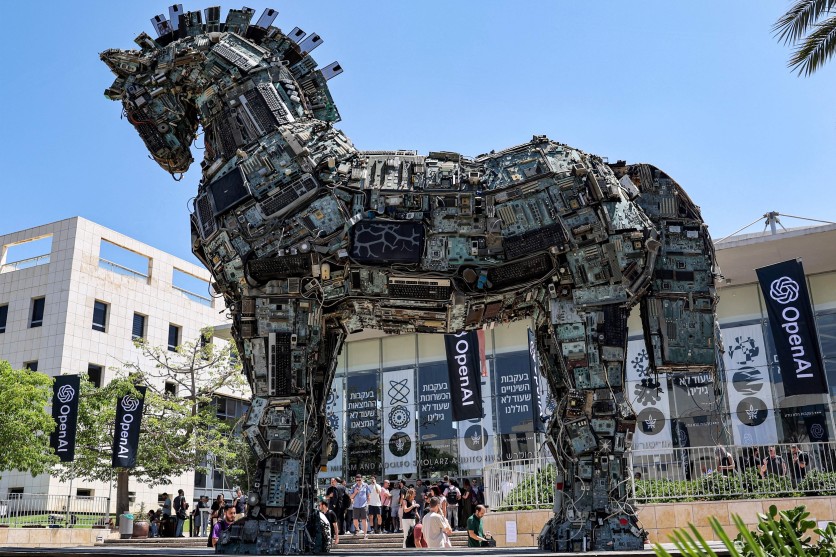

The metaphor behind the word is deliberate. A harness, in the equestrian sense, is the complete set of equipment for channeling a powerful but unpredictable animal in the right direction. The horse is the model — powerful and fast, but directionless without the harness.

What the Harness Contains

A complete harness, practitioners now broadly agree, includes several load-bearing elements: project-level context guides (such as AGENTS.md, CLAUDE.md, or SKILL.md files); tools wired through standards like the Model Context Protocol (MCP); sensors and verifiers that let the agent check its own work; permission boundaries and execution modes; reusable skills and hooks; sub-agent orchestration; full observability; and garbage collection to fight codebase entropy.

One widely cited formulation reduces this to a simple architectural equation: Agent = Model + Harness. The model functions as a stateless reasoning engine; the harness is the runtime software infrastructure that coordinates tool dispatch, context management, and safety enforcement. Enterprise data analysis suggests that as many as 65% of AI agent failures trace back to harness defects — context drift, schema misalignment, and state degradation — rather than to model limitations.
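The equation can be made concrete with a minimal Python sketch. Everything in it is hypothetical and for illustration only: `Harness`, `fake_model`, the tool names, and the step budget are assumptions, not any vendor's API. The model is a stateless function from context to a proposed action; the harness owns the context, dispatches tools, enforces a permission boundary, and runs a verifier before accepting a result.

```python
from dataclasses import dataclass, field
from typing import Callable

# An action proposed by the model: a tool name plus a single string argument.
Action = tuple[str, str]


def fake_model(context: list[str]) -> Action:
    """Hypothetical stand-in for a model call: stateless, sees only the context."""
    if not any(line.startswith("read_file ->") for line in context):
        return ("read_file", "README.md")
    if not any(line.startswith("DENIED") for line in context):
        return ("delete_repo", ".")  # a mistake the harness should block
    return ("finish", "summary written")


@dataclass
class Harness:
    model: Callable[[list[str]], Action]
    tools: dict[str, Callable[[str], str]]
    allowed: set[str]                  # permission boundary
    verifier: Callable[[str], bool]    # sensor that checks the final result
    context: list[str] = field(default_factory=list)

    def run(self, task: str, max_steps: int = 5) -> str:
        self.context = [f"task: {task}"]
        for _ in range(max_steps):
            tool, arg = self.model(self.context)
            if tool == "finish":
                # Accept the result only if the verifier passes.
                if self.verifier(arg):
                    return arg
                self.context.append(f"REJECTED: {arg}")
                continue
            if tool not in self.allowed or tool not in self.tools:
                # Safety enforcement: record the denial so the model can adapt.
                self.context.append(f"DENIED: {tool}")
                continue
            self.context.append(f"{tool} -> {self.tools[tool](arg)}")
        return "step budget exhausted"


harness = Harness(
    model=fake_model,
    tools={"read_file": lambda path: f"contents of {path}"},
    allowed={"read_file"},
    verifier=lambda result: len(result) > 0,
)
result = harness.run("summarize the repository")
print(result)
print(harness.context)
```

In this toy run the stand-in model proposes a destructive action, the harness denies it and records the denial in context, and the model's next step completes the task. The model function never changes; only the surrounding scaffolding decides what is allowed to happen.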

None of this replaces traditional software engineering. It moves the discipline upward. Quality gates, CI/CD, SRE practices, and infrastructure-as-code are now enforced at agent runtime, not at human commit time.

A Morning Report, an Accidental Proof

The irony of today's news is hard to miss. This morning, a company literally called Harness — the AI Software Delivery Platform — released The State of Engineering Excellence 2026, a study of 700 engineering practitioners and managers across the US, UK, India, France, and Germany. Its headline finding: AI adoption is now the default in engineering organizations, and self-reported productivity gains are overwhelmingly positive — but the costs are accumulating in places organizations aren't watching.

The report tells a complicated story: organizations are reporting record productivity gains while simultaneously acknowledging they no longer have the right instruments to tell whether those gains are real or what they are costing. Specifically, 89% of leaders say their current metrics accurately reflect AI's impact, yet 94% say key factors, including tech debt, validation time, and developer burnout, are missing from those same metrics. And only 6% believe the frameworks they have today can close that gap.

The report's top recommended remedy: treat AI performance as its own discipline — track AI agent accuracy, acceptance, and cost separately from human developer output, with a shared definition of "good" across the organization. That is, in a sentence, a description of harness engineering.
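One way to picture that recommendation is a report that never pools agent-authored and human-authored changes. The following is a minimal Python sketch under stated assumptions: the `Change` record, its field names, and the sample figures are all hypothetical, and "accuracy" is approximated here as the share of accepted changes that were not later reverted.

```python
from dataclasses import dataclass


@dataclass
class Change:
    author: str        # "agent" or "human" (hypothetical labels)
    accepted: bool     # merged after review
    reverted: bool     # later rolled back; a rough proxy for inaccuracy
    cost_usd: float    # inference spend for agents; 0.0 for humans in this sketch


def summarize(changes: list[Change], author: str) -> dict[str, float]:
    """Compute acceptance, accuracy, and cost for one author type only."""
    own = [c for c in changes if c.author == author]
    accepted = [c for c in own if c.accepted]
    kept = [c for c in accepted if not c.reverted]
    return {
        "acceptance": len(accepted) / len(own) if own else 0.0,
        "accuracy": len(kept) / len(accepted) if accepted else 0.0,
        "cost_per_accepted": (
            sum(c.cost_usd for c in own) / len(accepted) if accepted else 0.0
        ),
    }


# Made-up sample data, for illustration only.
changes = [
    Change("agent", True, False, 1.50),
    Change("agent", True, True, 2.50),
    Change("agent", False, False, 2.00),
    Change("agent", True, False, 2.00),
    Change("human", True, False, 0.0),
    Change("human", True, False, 0.0),
]

agent_report = summarize(changes, "agent")
human_report = summarize(changes, "human")
print(agent_report)
print(human_report)
```

Charging the cost of rejected agent changes to the accepted ones is a deliberate choice in this sketch: it keeps wasted inference spend visible instead of letting it vanish from the per-change average.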

"AI coding is the first technology shift in modern software that has changed not just what developers build, but how they spend their hours," said Trevor Stuart, SVP and General Manager at Harness. "Cloud and the internet were infrastructure revolutions layered underneath the developer. AI is reshaping the developer's job entirely, and the measurement frameworks that the industry has relied on for the past decade weren't built for this new unit of work."

Why the Timing Is Not Accidental

Industry data points to a sharp inflection across multiple dimensions simultaneously.

On the capability side, METR, a nonprofit focused on evaluating AI capabilities, estimated in February 2026 that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours on software tasks, meaning the model succeeds about half the time on tasks that would take a skilled human expert the better part of two workdays. As recently as mid-2024, frontier models had time horizons measured in single-digit minutes. The trend line shows a doubling roughly every four months.
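The compounding implied by that doubling rate is easy to check. Here is a minimal Python sketch, assuming simple exponential growth; the function name `growth_factor` is illustrative, and the calculation says nothing about whether the trend will continue.

```python
def growth_factor(months: float, doubling_months: float = 4.0) -> float:
    """Multiplicative growth after `months` if a quantity doubles every `doubling_months`."""
    return 2.0 ** (months / doubling_months)


# Doubling every four months compounds to 8x over one year and 64x over two.
print(growth_factor(12))  # 8.0
print(growth_factor(24))  # 64.0
```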

On the commercial side, Claude Code reached $1 billion in annualized revenue within six months of its May 2025 public launch, and had grown to over $2.5 billion in run-rate revenue by February 2026. MCP's combined Python and TypeScript SDKs surpassed 97 million monthly downloads by March 2026, up from roughly 100,000 at launch in late 2024. Every major AI provider now ships MCP-compatible tooling.

On the operational side, Microsoft's Azure SRE Agent, which reached general availability in April 2026, automates multi-layer investigation and root-cause analysis across applications, platform, and infrastructure — reducing mean time to resolution and on-call load at scale.

And yet, by the Cloud Security Alliance's estimate, 88% of enterprise agent projects still stall before production. The gap between what agents can technically do and what enterprises can actually deploy is precisely the gap that harness engineering exists to close.

Research published this week reinforces the point from an academic direction. A study from Stanford and Tsinghua University found that the same underlying model can produce performance gaps of up to 6x depending on how the agent wrapper — the harness — is designed. The model stayed constant; only the scaffolding changed. Outcomes ranged from nearly useless to near-human performance on complex multi-step tasks.

"Enterprises don't want a smart but uncontrollable demo," one engineer involved in early harness deployments noted. "They want an agent that is auditable, governable, and insurable. That requires a harness."

Red Hat's developer blog echoed this in April 2026: "The AI writes better code when you design the environment it works in."

A Paradigm Crystallizing in Real Time

What makes Harness Engineering unusual as a discipline is that its best practices are emerging faster than they can be codified. The infrastructure — Claude Code, Codex CLI, MCP, Google's Agent2Agent Protocol — was already deployed at scale by the time the field agreed on what to call it.

A Q1 2026 maturity matrix developed by practitioners captures the underlying principle: "When AI writes the code, the craft shifts to designing the system around it." The matrix spans ten dimensions, from observability to orchestration, with a key organizing insight: if information is available to humans but not to agents, the harness has a hole in it.

The OpenAI Frontier team's published playbook translates this into engineering advice that is already circulating widely: structure your repository so agents can navigate it; use progressive disclosure rather than dumping massive instruction files into context; run automated cleanup agents because AI-generated code accumulates entropy faster than human-written code; keep planning documents inside the codebase, not in tools the agent will never see. All of it rests on the same foundation — the harness, not the model, is where engineering judgment now lives.
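The progressive-disclosure advice can be sketched in a few lines of Python. This is an illustration under assumptions, not the playbook's implementation: the `DOCS` contents, the file names, and the `build_context` helper are hypothetical. The agent's starting context holds only a short index, and a full document is loaded only when the agent asks for it.

```python
# Hypothetical in-repo docs: a one-line summary plus the full text of each.
DOCS = {
    "docs/testing.md": ("How to run and write tests", "Run the test suite before every merge."),
    "docs/deploy.md": ("Release and rollback steps", "Tag a release, then watch the rollout."),
    "docs/style.md": ("Naming and formatting rules", "Prefer small functions with clear names."),
}


def build_index() -> str:
    """Short map of what exists; this is all the agent sees up front."""
    return "\n".join(f"{path}: {summary}" for path, (summary, _) in DOCS.items())


def build_context(requested: list[str]) -> str:
    """Index plus the full text of only the documents the agent requested."""
    parts = [build_index()]
    for path in requested:
        if path in DOCS:
            parts.append(f"--- {path} ---\n{DOCS[path][1]}")
    return "\n\n".join(parts)


initial = build_context([])
expanded = build_context(["docs/deploy.md"])
print(expanded)
```

The index costs a few lines of context no matter how large the documentation grows, while the expanded context carries only the one document relevant to the task at hand.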

For an industry that spent three years debating whether AI agents were ready for production, the answer in 2026 has become something more precise: agents are ready — once the harness is.

ⓒ 2026 TECHTIMES.com All rights reserved. Do not reproduce without permission.