OpenHuman, an open-source desktop AI agent built by the developer collective tinyhumansai, climbed GitHub Trending in May 2026 by promising something its two dominant rivals do not: context about the user on day one, before a single prompt is typed. For anyone evaluating AI agents for personal or professional use right now, that promise carries a significant caveat — the agent achieves that context by requesting continuous OAuth access to email, code repositories, calendar, chat history, and payment tooling simultaneously. The week it went trending, the broader agent market was already absorbing the fallout from Cisco's finding that OpenClaw, the category leader, was "from a security perspective, an absolute nightmare" — a documented record that makes any new entrant asking for the same breadth of access worth scrutinizing carefully.

Hermes Displaced OpenClaw as the Most-Used Agent the Day Before OpenHuman Appeared

Through the first half of 2026, the open-source agent landscape was defined by two projects. OpenClaw, the viral self-hosted assistant built by Austrian developer Peter Steinberger and recognised by its lobster mascot, accumulated 372,000 GitHub stars and built its lead through breadth: plugin support for nearly any stack, connections to more than 50 messaging platforms, and a community skill marketplace. Hermes Agent, from Nous Research, took a different approach, favouring depth over breadth: a closed learning loop rewrites its own skill files after complex tasks. It has 153,000 GitHub stars.

On May 10, 2026, Hermes crossed a specific threshold: it processed 224 billion tokens in a single day on OpenRouter's global inference platform, overtaking OpenClaw's 186 billion for the first time since OpenClaw launched in late 2025. A TechTimes report published May 15 documented that milestone, noting that OpenClaw still leads the cumulative all-time chart but that the daily figure is the leading indicator of where developers are placing new workloads. OpenHuman's appearance on Trending the following day placed it in an already contested market — and in the same week that OpenClaw's security record had become a liability its backers were actively defending.

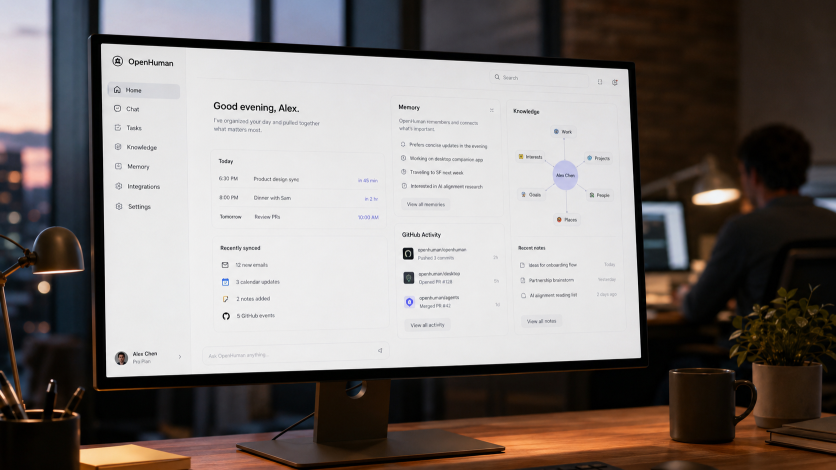

How OpenHuman Builds Its Picture of You

OpenHuman, released at v0.53.43 on May 13, 2026, rejects both rival models. Its README states directly: "Most agents start cold. Hermes learns by watching you work; OpenClaw waits for plugins to ferry context in." The counter-claim is that the agent, not the user, should do the work of building context — and that it should do so in minutes, not weeks.

The architecture runs a three-stage pipeline. First, connection: OpenHuman supports more than 118 third-party services — including Gmail, GitHub, Slack, Notion, Stripe, Google Calendar, Google Drive, Linear, and Jira — via one-click OAuth, requiring no manual API key configuration. Second, fetch: every 20 minutes, the agent polls every connected account and pulls new email, calendar events, code commits, and document edits to the local machine autonomously. Third, memory: incoming data passes through a deterministic pipeline that converts it to Markdown, chunks it at roughly 3,000 tokens, scores it, and builds what the project calls a Memory Tree — a hierarchical summary structure stored in a local SQLite database and simultaneously written as Markdown files compatible with the note-taking application Obsidian.
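The memory stage described above can be illustrated with a minimal Python sketch. It is not OpenHuman's code: the `nodes` table, the `chunk_markdown` and `build_tree` helpers, and the use of whitespace-separated words as a stand-in for tokens are all assumptions made for illustration. It shows only the general shape of chunking a document at roughly 3,000 tokens and storing the chunks under a parent node in SQLite.

```python
import sqlite3

CHUNK_TOKENS = 3000  # approximate chunk size quoted in the article


def chunk_markdown(text: str, max_tokens: int = CHUNK_TOKENS) -> list[str]:
    """Split text into chunks of at most max_tokens words.

    Assumption: whitespace-separated words approximate model tokens.
    A real pipeline would use the model's own tokenizer.
    """
    words = text.split()
    return [
        " ".join(words[i:i + max_tokens])
        for i in range(0, len(words), max_tokens)
    ]


def build_tree(conn: sqlite3.Connection, source: str, text: str) -> int:
    """Store one parent node per document and one child node per chunk.

    The schema is hypothetical. The parent body is a placeholder where a
    generated summary of the children would normally go.
    """
    conn.execute(
        """CREATE TABLE IF NOT EXISTS nodes (
               id INTEGER PRIMARY KEY,
               parent_id INTEGER,
               source TEXT,
               body TEXT)"""
    )
    chunks = chunk_markdown(text)
    cur = conn.execute(
        "INSERT INTO nodes (parent_id, source, body) VALUES (NULL, ?, ?)",
        (source, f"{len(chunks)} chunk(s) from {source}"),
    )
    root_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO nodes (parent_id, source, body) VALUES (?, ?, ?)",
        [(root_id, source, chunk) for chunk in chunks],
    )
    conn.commit()
    return root_id
```

Because the tree lives in ordinary SQLite rows, a user could inspect or edit it with any SQLite client, which is the property the article attributes to the Memory Tree.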

The inspectability of that memory layer is the design decision that distinguishes OpenHuman from embedding-based agents: a user can open, read, and edit the agent's knowledge directly as plain files. The project draws explicit inspiration from Andrej Karpathy's concept of a manually maintained "LLM wiki" — a structured Markdown knowledge base an AI can index — and automates that process end to end.

A separate compression layer the project calls TokenJuice converts HTML to Markdown, strips non-ASCII noise, shortens URLs, and de-duplicates content before it reaches the model. The project claims this reduces token consumption by up to 80%. OpenHuman also routes tasks across models automatically — sending reasoning-heavy work to a frontier model, routine tasks to a cheaper one, and image-related work to a vision model — and supports local inference through Ollama and LM Studio.
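To make the compression steps concrete, here is a rough Python sketch of the four operations named above. It is an illustration under stated assumptions, not TokenJuice itself: the `compress` function is hypothetical, tag stripping stands in for real HTML-to-Markdown conversion, and "shortening" a URL is taken to mean dropping its query string and fragment.

```python
import html
import re

TAG_RE = re.compile(r"<[^>]+>")
URL_RE = re.compile(r"https?://[^\s)>\]]+")


def shorten_url(match: re.Match) -> str:
    """Drop the query string and fragment (an assumed meaning of 'shorten')."""
    return re.split(r"[?#]", match.group(0), maxsplit=1)[0]


def compress(raw: str) -> str:
    """Apply four simple reductions before text reaches a model."""
    # 1. Strip HTML tags (a crude stand-in for HTML-to-Markdown conversion).
    text = html.unescape(TAG_RE.sub(" ", raw))
    # 2. Drop non-ASCII characters. Caution: this also destroys legitimate
    #    non-English text, so a real system would need to be more selective.
    text = text.encode("ascii", "ignore").decode("ascii")
    # 3. Shorten URLs by removing tracking parameters and fragments.
    text = URL_RE.sub(shorten_url, text)
    # 4. Normalise whitespace and keep only the first copy of each line.
    seen: set[str] = set()
    kept: list[str] = []
    for line in text.splitlines():
        line = " ".join(line.split())
        if line and line not in seen:
            seen.add(line)
            kept.append(line)
    return "\n".join(kept)
```

How much a pass like this saves depends entirely on the input, so the sketch makes no claim about the project's "up to 80%" figure.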

The Features That Justify the Marketing

On top of the memory layer, OpenHuman adds capabilities that produce its headline claims. A desktop mascot can join a Google Meet as a separate participant, listen to the call, transcribe notes into the Memory Tree, and speak back into the meeting. A "subconscious loop" runs without user interaction: the agent reads outstanding to-dos and recent memory and decides what actions to take next. Additional features include screen reading, inline keyboard autocomplete drawn from the Memory Tree, and voice input and output. The combined effect the project markets is an agent that behaves as though it has known the user for months, from the first session.

One App, Continuous Access to Everything — The Privacy Trade-Off That Defines the Design

The privacy surface that makes OpenHuman's value proposition possible is also its most consequential risk factor. An independent review by KnightLi, published May 15, 2026, raised specific concerns about the install path and the breadth of permissions the application requests. On macOS and Linux, installation is triggered by a single piped shell command. As the KnightLi analysis notes: "If this is your daily primary machine, it is better to download the installer from the official site first, or at least open and inspect the install script before deciding whether to execute a remote script directly."

The piped-shell install method is a recognised supply-chain risk vector: a user who runs the command without inspecting the script grants immediate execution privileges to remotely hosted code. OpenHuman is licensed under GPL-3.0 and its source is auditable, but the install path means most users will not audit it. That risk is not theoretical: the TechTimes report on the OpenClaw market detailed how Cisco's AI Threat and Security Research team found that a single malicious OpenClaw skill, distributed through its community marketplace, deployed credential-stealing macOS malware and exposed tens of thousands of users' browser credentials and cryptocurrency wallets. OpenHuman has no community skill marketplace yet — but the same install-path and aggregation-risk pattern applies from day one.
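The inspect-before-running advice can be sketched in Python. This is an illustrative helper, not an official OpenHuman tool: `fetch_script`, `review_script`, and the `RISKY` pattern list are hypothetical, and pattern matching is no substitute for actually reading the script. The idea is to download the installer to memory, record its SHA-256 digest, and surface lines that deserve a closer look, without executing anything.

```python
import hashlib
import re
import urllib.request

# Hypothetical patterns worth a second look; neither complete nor authoritative.
RISKY = [
    r"\bsudo\b",           # privilege escalation
    r"\brm\s+-rf\b",       # recursive deletion
    r"\|\s*(ba)?sh\b",     # piping further remote content into a shell
    r"\bchmod\s+\+x\b",    # marking downloaded files executable
]


def fetch_script(url: str) -> bytes:
    """Download a script without running it."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return resp.read()


def review_script(script: bytes) -> tuple[str, list[tuple[int, str]]]:
    """Return the SHA-256 digest and the (line number, text) of flagged lines."""
    digest = hashlib.sha256(script).hexdigest()
    flagged = []
    lines = script.decode("utf-8", "replace").splitlines()
    for number, line in enumerate(lines, start=1):
        if any(re.search(pattern, line) for pattern in RISKY):
            flagged.append((number, line.strip()))
    return digest, flagged
```

A reader would call `fetch_script` with the installer URL from the project's own documentation, pass the result to `review_script`, read the flagged lines in context, and only then decide whether to save and run the file. Recording the digest makes it possible to confirm later that the script that ran is the one that was reviewed.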

The deeper structural concern is aggregation. An agent that holds continuous OAuth tokens for a user's email, code, calendar, payments, and communications has assembled exactly the dataset that makes credential theft or local-storage compromise severe rather than merely inconvenient. OpenHuman's architecture is explicitly local-first — data lives in on-device SQLite and Markdown, not a vendor cloud — and its documentation states that signing in does not grant ongoing access without per-integration approval. But local storage does not eliminate risk; it relocates it from a cloud provider to the user's own machine, where endpoint security varies widely.

No named security researcher has published a formal audit of OpenHuman's codebase as of this article's publication. That absence is a function of the project's age, not a clearance.

776 Stars Is a Fast Start — OpenClaw Reached 372,000

OpenHuman's current GitHub star count of 776 reflects early momentum rather than durable community scale. OpenClaw has accumulated 372,000 stars over a longer period, having crossed 100,000 within 48 hours of its January 2026 relaunch. Hermes Agent stands at 153,000. OpenHuman's total is roughly 0.2% of OpenClaw's and 0.5% of Hermes Agent's, a gap that illustrates the difference between Trending-period velocity and sustained adoption.

OpenHuman's own documentation describes it as early-stage software, still under active development with rough edges expected. The current release, v0.53.43 (May 13, 2026), has not been independently stress-tested at scale. The 80% token-compression claim, the 20-minute sync reliability, and the Memory Tree's behaviour under large data volumes are all project assertions without third-party validation.

What This Means for People Choosing an Agent Today

The emergence of OpenHuman makes three things clear for anyone evaluating open-source agents in mid-2026. First, the market has moved from two dominant architectural models (breadth versus depth) to at least three, with context-first design now a distinct approach. Second, the privacy trade-off embedded in context-first design is not incidental to how it works; it is the mechanism, and no amount of local-first architecture eliminates aggregation risk. Third, a project with fewer than 800 stars and no independent security audit is not yet a production tool; it is a promising early-stage project that deserves scrutiny proportional to the access it requests.

For developers or professionals evaluating agents now: OpenHuman offers a genuinely different architectural premise backed by real engineering. But granting any single application simultaneous OAuth access to email, code, calendar, and payment accounts — however local the storage — warrants a security review before deployment in any professional context. The agent that claims to know you best also knows the most about you.

ⓒ 2026 TECHTIMES.com All rights reserved. Do not reproduce without permission.