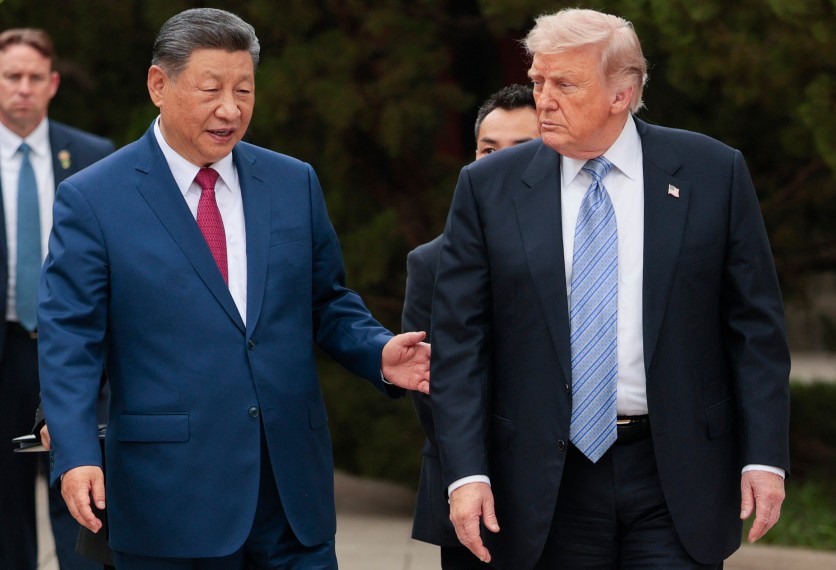

On May 15, 2026, returning from the first US presidential visit to China since 2017, Donald Trump told reporters aboard Air Force One that he and Xi Jinping had discussed "possibly working together for guardrails" on artificial intelligence. The statement signals a potential shift in great-power AI diplomacy, even as it stops well short of any formal agreement. The remark came weeks after Anthropic disclosed that its Mythos model had exposed tens of thousands of unpatched software vulnerabilities in critical global infrastructure, forcing both governments to confront a shared technological risk that no export control regime can contain alone.

For ordinary people, the stakes are immediate: the same AI systems now being discussed in diplomatic terms are the ones capable of finding and exploiting vulnerabilities in the hospitals, banks, and government networks that underpin daily life. Whether Washington and Beijing can agree on anything — let alone enforce it — will shape how those systems develop and who they endanger.

Trump Said "Guardrails." Beijing Said Nothing Specific. That Gap Is the Story.

Trump's comment was the headline, but its vagueness was the signal. When asked what kind of AI guardrails he had in mind, the president cited "the guardrails that we talk about all the time" — offering no mechanism, no timeline, and no named counterpart on the Chinese side. A White House official said before the summit that the administration wanted to "open up a conversation" about AI, but acknowledged the "formality" of any resulting channel was "yet to be determined."

Treasury Secretary Scott Bessent, speaking to CNBC during the Beijing summit, said the US could pursue AI talks with China "because we are in the lead" — a framing that treats safety dialogue as a by-product of competitive advantage rather than a shared governance priority.

Chris McGuire, a senior fellow for China and emerging technologies at the Council on Foreign Relations who led US-China AI policy at the National Security Council under President Biden, was direct about the asymmetry. In an analysis published May 13, McGuire wrote that Beijing "will not negotiate in good faith on AI safety" and that China's primary interest in any dialogue remains closing the gap on AI chip access, not reducing shared risk. Speaking after the summit at a CFR media briefing on May 15, McGuire noted the Biden administration had already run an AI dialogue with China in which "the Chinese indicated they weren't really interested in negotiating in good faith on shared risks. Their number-one priority is catching up to the United States in AI."

That friction is not theoretical. In reporting by The Hill before the summit, McGuire described how that unwillingness played out in previous exchanges: US technical experts focused on safety, while their Chinese counterparts focused on export controls.

Why Mythos Made This Summit Different

The immediate catalyst for bringing AI safety onto the summit agenda was not strategic calculation alone — it was a single model release. In early April 2026, Anthropic published a technical assessment of Mythos Preview, a frontier AI model it declined to release publicly on the grounds that it poses unprecedented cybersecurity risks. The model had located vulnerabilities in every major operating system and web browser, including a 27-year-old flaw in OpenBSD, and had demonstrated the ability to chain exploits autonomously — without requiring human expertise at each step.

Anthropic CEO Dario Amodei warned of a narrow window — roughly six to twelve months, by his estimate, before Chinese AI models reach comparable capabilities — in which governments and companies could patch the vulnerabilities Mythos identified. The model found nearly 300 flaws in the Firefox browser alone; an earlier Anthropic model had found roughly 20.

The concern reached the summit directly. Senior White House officials told reporters that "frontier AI models that expose vulnerabilities in national cybersecurity infrastructure" would be a critical talking point in Beijing, particularly in light of Mythos. Chinese state media had already flagged the model for its potential use in offensive cyber operations.

Anthropic's Selective Access Left Most Governments Exposed

Anthropic launched Project Glasswing to give approximately 40 major technology firms — including Amazon Web Services, Apple, Cisco, CrowdStrike, Google, JPMorgan Chase, Microsoft, and Nvidia — early access to Mythos for defensive scanning. The White House blocked a proposed expansion to roughly 70 additional organizations.

The practical result: most central banks and governments worldwide were excluded from the program, leaving their infrastructure exposed to vulnerabilities that a small set of US technology companies could already see and patch. Rest of World reported that AI-enabled cyberattacks increased by 89% in 2025, citing CrowdStrike data, while the global shortage of cybersecurity professionals deepens the risk.

Justin Herring, a partner at law firm Mayer Brown and former executive deputy superintendent for cybersecurity at New York's Department of Financial Services, framed the structural problem plainly: "You have a significant increase in the volume of vulnerabilities discovered, but they don't seem to have deployed a tool that helps you fix them. Vulnerability management is the great Sisyphean task of cybersecurity."

China's Own Models Display Documented Escalatory Bias

The risk runs in both directions. Researchers at the Center for Strategic and International Studies (CSIS) published analysis in February 2026 showing that China's Qwen2 model chose escalatory options in nearly 45% of foreign policy crisis scenarios, a result drawn from a benchmark built from 66,473 data points across realistic crisis simulations. DeepSeek, China's most advanced publicly available model, showed similar hawkish patterns, particularly in scenarios involving Western democracies.

In a May 12 commentary ahead of the summit, CSIS researchers Benjamin Jensen and Yasir Atalan argued that Trump and Xi should treat shared AI benchmarking as a central agenda item: "AI systems should not be treated as neutral tools when they are asked to advise policymakers on war and peace, absent testing and refinement." Jensen and Atalan did not argue against competition. Their point was that integrating models with dangerous strategic biases into government decision-support workflows poses risks to both sides simultaneously.

Hai Zhao, a director of international political studies at the Chinese Academy of Social Sciences, a state-affiliated think tank, told CNBC that an AI arms race would be "bad not just for both countries, but for all humanity" — and called for a global treaty governing military AI use.

The H200 Stalemate Shows the Limits of Diplomatic Momentum

Whatever political momentum the summit generated has not yet moved the physical infrastructure of the AI economy. On May 14, Reuters reported that the US had cleared approximately ten Chinese firms — including Alibaba, Tencent, ByteDance, and JD.com — to purchase Nvidia's H200 AI processors, with Lenovo and Foxconn approved as distributors. Not a single delivery has been made.

Beijing appears to be actively discouraging its companies from proceeding, partly in response to two US requirements: that 25% of chip-sale revenues flow to the US Treasury, and that the chips physically route through US territory before reaching China. Commerce Secretary Howard Lutnick told a Senate hearing that Beijing was keeping investment focused on domestic chip development. Before US export controls tightened, Nvidia commanded roughly 95% of China's advanced-chip market; that share has since fallen effectively to zero.

Nvidia CEO Jensen Huang, added to the presidential delegation at Trump's personal invitation and picked up in Alaska en route to Beijing, publicly expressed hope for a breakthrough. None was announced.

What Readers Can Do Now — and What Remains Unresolved

The gap between a US president saying "possibly working together for guardrails" and a functioning bilateral AI safety framework is substantial. The Biden administration opened a formal AI dialogue with China in 2023; it produced limited results. The current administration has no named structure, no agreed scope, and no timeline for follow-up conversations.

For businesses and individuals whose data, financial accounts, hospital records, and government services run on software that Mythos has already scanned for vulnerabilities, the relevant question is not whether Washington and Beijing will agree on AI governance. It is whether the organizations holding that data are among the roughly 40 that got early access to defensive tools or among the majority that did not.

McGuire's bottom line, delivered at the CFR post-summit briefing, was direct: any US-China AI safety dialogue must be "narrowly focused on AI safety issues" and coupled simultaneously with export controls that preserve the American lead. The alternatives, he argued, are trusting Beijing's good faith or waiting for a catastrophe to change its calculus. The talks that may or may not follow the Beijing summit will test which of those three paths the Trump administration actually chooses.

ⓒ 2026 TECHTIMES.com All rights reserved. Do not reproduce without permission.